Photographers around the world want to know which format is better. Is it 35mm Full Frame, the traditional sensor size that most closely matches old-fashioned 35mm film, or one of the many crop formats? Read on to find out.

If you’re wondering what a crop sensor even is, you’re not alone. Many people are confused by the naming conventions around sensor size. The first thing to know is that in digital photography, a crop sensor is usually any sensor smaller than a 35mm sensor. Sure, there are sensors larger than 35mm, so doesn’t that make 35mm a kind of crop sensor too? Well, in actual fact, yes: compared with the origins of photography, 35mm would traditionally be considered a crop format. Looking at the ancient history of photography formats, it all began with large format. But just about nobody shoots large format anymore, and hardly anyone shoots medium format. 35mm became the standard in the 20th century simply by being the most readily available film size that just about anyone could afford.

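And if we’re talking about Hollywood movies, calling 35mm “Full Frame” might make even more sense. Just about every Hollywood movie you’ve ever seen was shot on 35mm, in whole or at least in part, and that’s true whether it was shot on film or digitally. (One wrinkle: movie cameras run 35mm film vertically, so a cinema frame such as Super 35 is closer in size to APS-C than to the 36 × 24 mm still-photo frame.)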

The price of film was one of the biggest hurdles any filmmaker had to overcome to get a movie made; the film stock alone could cost millions of dollars. Naturally, anyone on a budget had to use a smaller, cheaper format like 16mm or even 8mm. So in Hollywood’s film days, 16mm was the crop format and 35mm was considered “Full Frame”, probably because you needed a “Full Wallet” to shoot it.

Despite this, and probably just to confuse everyone, some filmmakers insisted on using even larger film. Who knows, maybe someday they’ll come up with a reason to make IMAX-sized camera sensors. A starting price of 1 million dollars would be a deal.

Basically, 35mm is called Full Frame because everybody was using it. Amateurs used it, professionals used it, and Hollywood used it on blockbuster films that made millions of dollars. When digital came along, camera companies naturally stuck with what they knew best: they made 35mm digital sensors and kept making lenses that covered the 35mm frame. At the end of the day, 35mm was kind of shoehorned into the modern age. It made sense because it’s what people knew, and it’s actually kind of nice, because it means lots of lenses and such work basically the same on 35mm sensors as they did on 35mm film.

35mm sensors seemed like a miracle of technology. For one, the cameras weren’t hugely more expensive than their film counterparts, and that’s before counting the never-ending cost of buying and developing film. For all intents and purposes, 35mm digital cameras took pictures for free compared with the cost and effort film used to require.

But apparently it still wasn’t cheap enough! The market exclaimed, “Why can’t we have our cameras for even less money?! We don’t want free pictures, we want less-than-free pictures!” SHEESH.

So the camera companies came up with a solution: “crop sensor” cameras. The main reason for crop sensors was to make digital sensors cheaper to manufacture, which let camera companies sell cameras for even less money than before.

One of the first of these formats is APS-C. APS-C is actually also a holdover from the days of film: it takes its name from the “C” (Classic) frame size of APS (Advanced Photo System) film. Of course, APS film existed for the exact same reason APS-C sensors exist: to sell film for less money.

But that wasn’t cheap enough, so they went even smaller with a new crop format called Four Thirds, later followed by its mirrorless sibling, Micro Four Thirds (MFT), which uses the same sensor size. Unlike 35mm and APS-C, which are based on earlier film standards, the Four Thirds sensor size was designed for digital from the start.

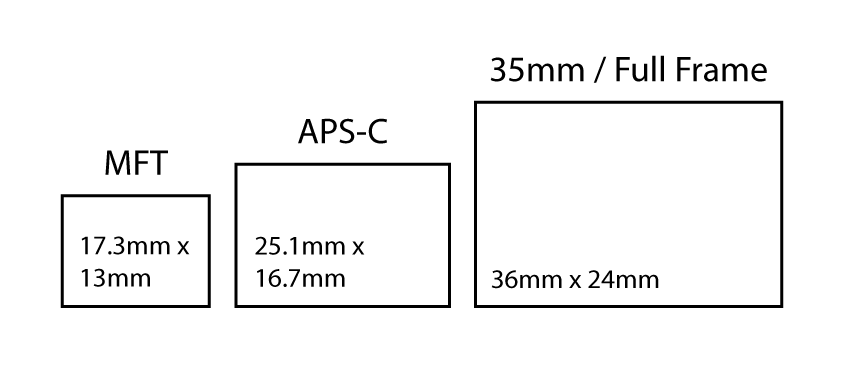

To get an idea of how these 3 common sensor sizes compare check out the image below:

So, back to the original question: which is better? Nobody can really give a single answer, for a variety of reasons. APS-C and MFT are certainly cheaper than 35mm, and crop sensor cameras tend to be smaller and lighter, with smaller and lighter lenses. But does all that make them better? Not really, and here’s why.

Light itself has a resolution limit, set by diffraction, and that limit is not very small in the grand scheme of things: at common apertures, some modern camera sensors pack their pixels more tightly than light can resolve. Sensors that exceed this limit often use a technique called “pixel binning”, which combines neighboring pixels into one larger effective pixel, mainly to reduce noise and improve color accuracy in the final image.
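
The “resolution limit of light” here is diffraction. As a rough sketch (not a description of how any real camera decides when to bin), the Python below compares the diffraction blur spot, the Airy disk with diameter 2.44 × wavelength × f-number, against the pixel pitch of a 32-megapixel sensor in each format. The sensor dimensions, the 550 nm wavelength and the “Airy disk spans two pixels” threshold are all assumptions chosen for illustration.

```python
import math

WAVELENGTH_UM = 0.55  # assumption: green light, 550 nm

# Assumed nominal sensor sizes in millimetres (width, height).
SENSORS_MM = {
    "Full Frame": (36.0, 24.0),
    "APS-C": (23.5, 15.6),
    "MFT": (17.3, 13.0),
}


def pixel_pitch_um(width_mm, height_mm, megapixels):
    """Approximate pixel pitch in micrometres, assuming square pixels covering the whole sensor."""
    area_um2 = (width_mm * 1000) * (height_mm * 1000)
    return math.sqrt(area_um2 / (megapixels * 1e6))


def airy_disk_um(f_number, wavelength_um=WAVELENGTH_UM):
    """Airy disk diameter (out to the first dark ring): 2.44 * wavelength * f-number."""
    return 2.44 * wavelength_um * f_number


def diffraction_onset_f_number(pitch_um, pixels_spanned=2.0, wavelength_um=WAVELENGTH_UM):
    """f-number at which the Airy disk spans `pixels_spanned` pixels.
    The two-pixel default is an assumed rule of thumb, not a standard."""
    return pixels_spanned * pitch_um / (2.44 * wavelength_um)


for name, (w, h) in SENSORS_MM.items():
    pitch = pixel_pitch_um(w, h, megapixels=32)
    onset = diffraction_onset_f_number(pitch)
    print(f"{name:10s} pitch {pitch:.2f} um, diffraction softening from about f/{onset:.1f}")
```

Under those assumptions, the 32-megapixel MFT sensor starts losing sharpness to diffraction at roughly f/4, about two stops earlier than the Full Frame sensor (around f/7.7).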

Pixel binning is a good solution, but it has one major problem: it doesn’t increase the resolution of the final image. Binning a 2x2 block of pixels into one actually divides the output pixel count by four. For this reason, the smaller a sensor is, the lower its effective resolution limit.
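
To make the trade-off concrete, here is a simplified Python model of 2x2 binning on a synthetic noisy image. The flat grey scene and the noise level are made up for illustration, and real sensors bin in hardware, often on special color filter layouts. Each 2x2 block is averaged into one pixel, so the pixel count drops by a factor of four while random noise roughly halves.

```python
import random
import statistics


def bin_2x2(image):
    """Average each non-overlapping 2x2 block into one output pixel.
    `image` is a list of equal-length rows with even dimensions.
    (Modelled as averaging; summing gives the same signal-to-noise gain.)"""
    rows, cols = len(image), len(image[0])
    if rows % 2 or cols % 2:
        raise ValueError("image dimensions must be even")
    return [
        [
            (image[r][c] + image[r][c + 1] + image[r + 1][c] + image[r + 1][c + 1]) / 4
            for c in range(0, cols, 2)
        ]
        for r in range(0, rows, 2)
    ]


def pixel_count(img):
    return len(img) * len(img[0])


def noise(img):
    """Standard deviation of all pixel values (the scene is flat, so this is pure noise)."""
    return statistics.pstdev([v for row in img for v in row])


# Hypothetical flat grey scene: true value 100 plus Gaussian noise (std dev 10) per pixel.
random.seed(0)
noisy = [[100 + random.gauss(0, 10) for _ in range(400)] for _ in range(400)]
binned = bin_2x2(noisy)

print(pixel_count(noisy), "->", pixel_count(binned))
print(f"noise {noise(noisy):.1f} -> {noise(binned):.1f}")
```

The output pixel count is a quarter of the input, which is exactly the “no extra resolution” problem described above.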

Unfortunately, I can’t tell you exactly where this limit is for each sensor, or which sensors handle it best, because I don’t know the details of how those sensors are made. All I can say is that smaller sensors currently tend to perform worse because of the sheer number of pixels crammed onto most of them. You can think of a sensor with too many pixels as being “overpopulated”. If light is food, the pixels on these sensors aren’t getting enough to eat. There’s only so much light to go around, which is why there is a limit to sharpness and color accuracy.

For this reason, a 32-megapixel Full Frame sensor will generally perform better than a 32-megapixel APS-C sensor, which in turn will perform better than a 32-megapixel MFT sensor. The one caveat is that this doesn’t always hold across generations: newer APS-C cameras will probably outperform older Full Frame cameras in noise and color accuracy. For example, a modern APS-C sensor is likely to outperform the original Canon 5D, a Full Frame camera released in 2005.
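
A back-of-the-envelope way to see the “not enough food” effect: at the same exposure (same f-number, shutter speed and scene brightness), the light each pixel collects scales with its area, and photon shot noise means the signal-to-noise ratio grows with the square root of the light collected. The sketch below assumes nominal sensor sizes and ignores everything else that changes between generations, such as read noise and sensor efficiency, which is exactly why a newer crop sensor can still beat an older Full Frame one.

```python
import math

# Assumed nominal sensor sizes in millimetres (width, height).
SENSORS_MM = {
    "Full Frame": (36.0, 24.0),
    "APS-C": (23.5, 15.6),
    "MFT": (17.3, 13.0),
}


def light_per_pixel(width_mm, height_mm, megapixels):
    """Sensor area per pixel in mm^2; at equal exposure, collected light scales with this."""
    return width_mm * height_mm / (megapixels * 1e6)


reference = light_per_pixel(36.0, 24.0, 32)
for name, (w, h) in SENSORS_MM.items():
    rel_light = light_per_pixel(w, h, 32) / reference
    rel_snr = math.sqrt(rel_light)  # shot noise: SNR grows with the square root of photon count
    print(f"{name:10s} light per pixel {rel_light:.2f}x, shot-noise SNR {rel_snr:.2f}x")
```

With these assumed dimensions, each APS-C pixel collects about 42% and each MFT pixel about 26% of the light of a Full Frame pixel, giving roughly 65% and 51% of the per-pixel shot-noise signal-to-noise ratio.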

By reading the following you acknowledge that any profit made from this knowledge will be 100% directed to my bank account.

Full Frame will always have one advantage that so many people love to death: BOKEH! One unavoidable side effect of using a smaller sensor is that you get less bokeh. It might be hard to understand, but essentially a lens’s focal length is related to its image circle. While a lens isn’t technically tied to any particular sensor, the size of its image circle does determine which sensor sizes the lens will work on, so in that regard there is a relationship. It may not make a lot of sense, but the “50mm” lens on an iPhone isn’t actually a 50mm lens like the one on a 35mm interchangeable-lens camera (ILC). It’s actually a much wider focal length than that.
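
The usual way to relate focal lengths across formats is the crop factor: the ratio of the Full Frame diagonal to the smaller sensor’s diagonal. This Python sketch uses assumed nominal sensor sizes (real APS-C sensors vary slightly by brand), and the “phone” entry is a hypothetical 8 × 6 mm sensor for illustration, not any particular iPhone.

```python
import math

FULL_FRAME_MM = (36.0, 24.0)

# Assumed nominal sensor sizes in millimetres; the phone entry is hypothetical.
SENSORS_MM = {
    "APS-C": (23.5, 15.6),
    "MFT": (17.3, 13.0),
    "Phone (hypothetical)": (8.0, 6.0),
}


def diagonal(width_mm, height_mm):
    return math.hypot(width_mm, height_mm)


def crop_factor(width_mm, height_mm):
    """Ratio of the Full Frame diagonal to this sensor's diagonal."""
    return diagonal(*FULL_FRAME_MM) / diagonal(width_mm, height_mm)


def real_focal_length_for(equivalent_mm, width_mm, height_mm):
    """Actual focal length giving the same field of view as `equivalent_mm` on Full Frame."""
    return equivalent_mm / crop_factor(width_mm, height_mm)


for name, (w, h) in SENSORS_MM.items():
    crop = crop_factor(w, h)
    real = real_focal_length_for(50, w, h)
    print(f"{name:20s} crop {crop:.2f}, a '50mm' view needs {real:.1f} mm")
```

With these numbers, a “50mm” field of view needs about 32.6 mm on APS-C, 25 mm on MFT and under 12 mm on the hypothetical phone-sized sensor.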

You might be thinking: well, can’t you just shrink a DSLR lens down and put it on an iPhone? Uh, yeah, you can, and if you do, it won’t work on an iPhone like a DSLR 50mm lens anymore. But why, you exclaim!! What about EQUIVALENCE!!! Contrary to the popular belief of equivalence theorists, shrinking a Full Frame DSLR lens doesn’t change one key aspect: the fact that it is full frame. Lenses are continuous curves, which means every point on the lens focuses light to the same point in the image circle. Whether the lens is iPhone-sized or the size of a basketball, the light goes to the same point on the sensor. So if a DSLR lens is shrunk down to work on an iPhone, it will still have a Full Frame-sized image circle.

Getting back to the point at hand: the iPhone “50mm” isn’t really 50mm like a DSLR 50mm. Its actual focal length is much shorter, just a few millimeters, and it only frames like a 50mm because the sensor is so small. This is how sensor size relates to the focal length of the lens. Since smaller sensors need shorter focal lengths for the same field of view, depth of field gets much deeper and focus fall-off is greatly reduced, which makes background separation harder. The smaller the sensor gets, the harder it is to achieve background separation. Instead of the awesome bokeh you get with a DSLR 50mm lens, the iPhone “50mm” has almost no bokeh unless you enable Portrait Mode. Portrait Mode works by building a rough depth map (on dual-camera iPhones, from the parallax between two of the lenses) and then using it to blur the background digitally.
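
One way to put numbers on background separation is the size of the blur disc that a distant background point makes, compared with the size of the frame. Under the thin-lens approximation, a lens of focal length f at f-number N focused at distance s renders a point at infinity as a disc of diameter f² / (N·(s − f)) on the sensor. The sketch below compares a 50mm-equivalent field of view at f/1.8 with the subject at 2 m on each format; the sensor sizes, aperture and distance are all assumptions.

```python
import math

FULL_FRAME_MM = (36.0, 24.0)

# Assumed nominal sensor sizes in millimetres (width, height).
SENSORS_MM = {
    "Full Frame": (36.0, 24.0),
    "APS-C": (23.5, 15.6),
    "MFT": (17.3, 13.0),
}


def diagonal(width_mm, height_mm):
    return math.hypot(width_mm, height_mm)


def background_blur_mm(focal_mm, f_number, subject_mm):
    """Blur-disc diameter on the sensor for a background point at infinity,
    with the lens focused at `subject_mm` (thin-lens approximation)."""
    return focal_mm ** 2 / (f_number * (subject_mm - focal_mm))


def blur_fraction_of_frame(width_mm, height_mm, equivalent_mm=50, f_number=1.8, subject_mm=2000):
    """Background blur as a fraction of the frame diagonal, at the same field of view."""
    crop = diagonal(*FULL_FRAME_MM) / diagonal(width_mm, height_mm)
    focal_mm = equivalent_mm / crop  # same framing as `equivalent_mm` on Full Frame
    return background_blur_mm(focal_mm, f_number, subject_mm) / diagonal(width_mm, height_mm)


for name, (w, h) in SENSORS_MM.items():
    pct = 100 * blur_fraction_of_frame(w, h)
    print(f"{name:10s} background blur = {pct:.2f}% of the frame diagonal")
```

Under those assumptions, the background blur on Full Frame comes out at roughly twice the MFT figure, relative to the frame.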

Any more questions? Direct them to my agent, and by the way, she’s been instructed to ignore all of you. Thanks for playing!

For the final conclusion I’ll refer to the following chart:

| Sensor Type | MFT | APS-C | Full Frame |
| --- | --- | --- | --- |
| Bokeh Quality | 2.5 | 4 | 5 |
| Image Quality/Resolution | 3 | 4 | 5 |
| Price (higher = cheaper) | 5 | 4.5 | 4.25 |
| Total Score | 10.5 | 12.5 | 14.25 |

Bokeh is subjective, but generally speaking, the ability to create more blur is better, and it makes using a zoom lens nicer too. Matching the blur of Full Frame zooms on an MFT camera usually requires the fastest available MFT prime lenses, and it’s typically not possible for MFT to match fast Full Frame primes on Full Frame sensors at all.
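
Rearranging the same thin-lens blur approximation (and assuming the subject is far away compared with the focal length) gives a common rule of thumb: to match Full Frame background blur at the same field of view, a smaller format needs roughly the Full Frame f-number divided by the crop factor. A quick sketch, using the same assumed sensor sizes as before:

```python
import math

FULL_FRAME_DIAG_MM = math.hypot(36.0, 24.0)

# Assumed nominal sensor sizes in millimetres (width, height).
SENSORS_MM = {"APS-C": (23.5, 15.6), "MFT": (17.3, 13.0)}


def matching_f_number(ff_f_number, width_mm, height_mm):
    """f-number a smaller format needs to roughly match Full Frame background blur
    at the same field of view and subject distance (valid when the subject is
    far away compared with the focal length)."""
    crop = FULL_FRAME_DIAG_MM / math.hypot(width_mm, height_mm)
    return ff_f_number / crop


for lens, ff_n in [("FF f/2.8 zoom", 2.8), ("FF f/1.4 prime", 1.4)]:
    for name, (w, h) in SENSORS_MM.items():
        print(f"{lens} on {name}: needs about f/{matching_f_number(ff_n, w, h):.1f}")
```

With these assumptions, matching an FF f/2.8 zoom on MFT takes about f/1.4, which is fast-prime territory, while matching an FF f/1.4 prime would take about f/0.7, an aperture few if any MFT lenses offer.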

Bottom line: Full Frame edges out the competing formats. I think price will become less of a concern for the cameras themselves in the future, and the main cost penalty for Full Frame will be the lenses. To be completely honest, I may be penalizing Full Frame here when I shouldn’t be. It’s true there are some pricey Full Frame cameras, but some MFT cameras also top $3,000. I’m going by the lowest possible price, and by that measure MFT just barely edges out a price advantage. But to be perfectly clear, some Full Frame cameras are cheaper than some MFT cameras, so keep that in mind when you’re shopping around.

Sorry crop sensor advocates but you’re going to have to come up with a better argument for buying a smaller sensor size.